Introduction

It’s a frontend application functionality, Once user start typing in a search field of a e-commerce, social media app for user search or a simple search app like google, bing.

- It doesn’t search fast rather help user frame sentence or think faster. Providing realtime suggestion from previously searched query or trending search or current context of search for the application platform.

- Behind the scene algorithm process to search result with relevance and ranking of search term.

- System should have very low latency as system need to compete with user typing speed.

Key Requirements:

- Low latency (instant suggestions).

- High fault tolerance (system should not break easily).

- Personalization (using past interactions and preferences).

Typeahead Design problem

Problem related to this topic might be asked as :

- How would you design a typeahead suggestion system that is capable of learning from user behavior—how would you incorporate real-time data processing and feedback loops?

- How does load balancer placement and configuration impact the typeahead system performance and user experience?

- How would you efficiently update sub-tree structures in a typeahead system to accommodate new data ingestion from various sources, such as web crawlers, etc.?

Functional Requirements

- System should suggest top 10 list of suggestion for user typed search query.

Non-functional requirements

- Low Latency

- System should show all the query suggesstion in realtime with low latency.

- Average user typing time between two keystroke time is 160ms

- If a user is typing fast he already know he want to type and our suggestion doesn’t need to help him.

- Our query response time should be little more than average time.

- Lets take response time as 200ms as it bit higher than 160ms

- Fault Tolerance

- Our system should perform well even if some of the system fail for certain reason.

- Scalability

- System should adoptively scale up as an when number of user grows on our platform and scale down as no of user reduced by certain thershold.

Resource Estimation

We need to design a system which can be scaled up to serve a platform like google. Google search has 3.5 billion query per day. Lets estimate storage and bandwidth for the system.

Storage Estimation

- DAU (Daily active user) 3.5 billion = 3.5 * 10^9

- Assuming that out of the 3.5 billion queries per day, 2 billion queries are unique and need to be stored.

- Let’s also assume that each query is of 15 characters on average of 2 Bytes of storage per character.

- Storage = 2 billion×15×2=60 GB to store all the queries made in a day.

- Storage required per year:

- 60 GB/day × 365 = 21.9 TB/year

Bandwidth Estimation

- 15×3.5 billion = 52.5 billion characters per day.

- Total read requests per second:

- 52.5B/86400≈0.607M characters/sec.

- Incoming Traffic – 0.607 million×2×8=9.7 Mb/sec

- Outgoing traffic of 10 suggestion – 10×9.7 Mb/sec=97 Mb/sec

Number of servers estimation

- 52.5 billion query/day

- Standard server capacity to handle 64000 QFPS

- 52.5 B/64000 ≈ 800 K Server

API Design

Get Suggestions

getSuggestions( -> [10 record]query)

Save trending queries to the database

addToDatabase(query)-> boolean

query: This represents a frequently searched query that crosses the predefined limit from different user.

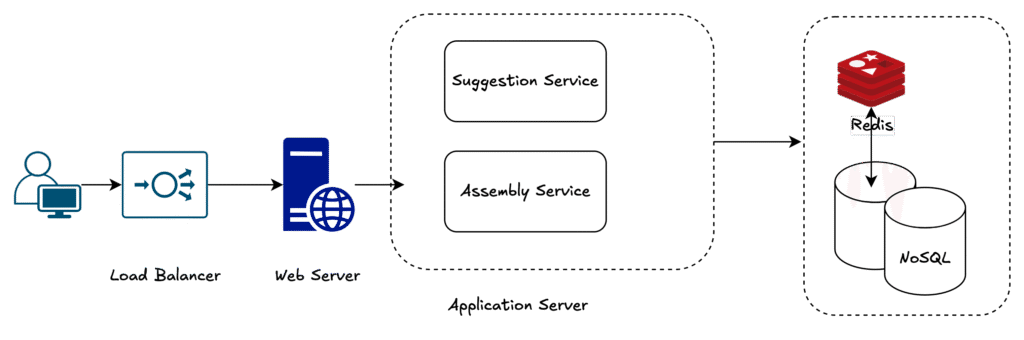

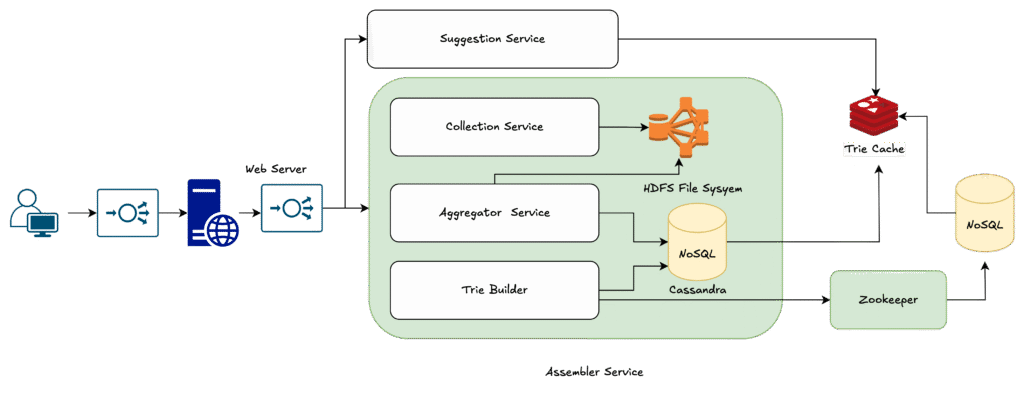

High Level Design

We need to perform two main functionality

- We need to return 10 suggestion when user type with low latency, So introduced suggestion service to fetch suggestions. To make query fast we can pull data from redis cache as the right fit which runs on RAM.

- We also need to rank and store trending query for further use , Assembly service can perform that operation separate to uninterupt suggestion service and store data in replicated node of NoSQL server

- With high level design we are able to fullfill our functional requirement.

Language Support

Q1. What’s the first step to support multiple languages?

- Detect the user’s selected language.

- Store and serve language-specific suggestions.

Q2. How to handle character sets and input size differences?

- Use UTF-8 encoding for all languages.

- Optimize indexes to handle multi-byte characters.

Q3. How should we store and retrieve language-specific suggestions?

- Keep separate indexes per language.

- Or shard data based on language for faster lookups.

Q4. How do language differences impact ranking and relevancy?

- Maintain per-language ranking models.

- Mix local popularity with global trends for balanced results.

Q5. Should we translate queries across languages?

- Pre-translate frequent queries to save latency.

- Runtime translate rare queries and cache them to reduce storage overhead.

Q6. How does load balancing work in a multilingual system?

- Use language-based server clusters or region-based traffic partitioning.

- Ensures no single server is overloaded.

Data Structure for Storing Prefixes/Query

Lets suppose user type Uni , and we have stored words like – United, Unique, Universe, Unified, Unilateral …

Either we can do a like search which will scan db and match search term field value and return top 10 result based on ranking column.

If we have a data structure which can make this decision fast , will im prove query performance.

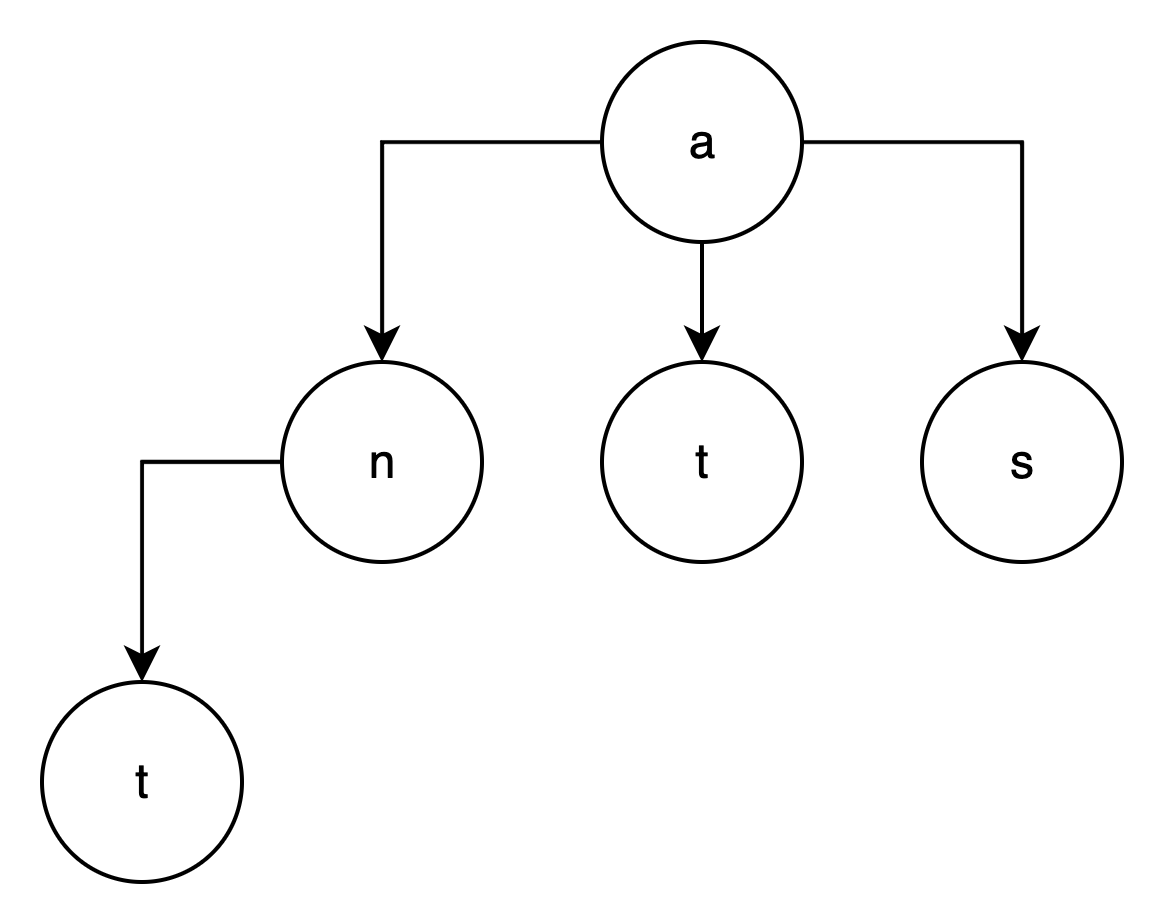

Trie is one of data structure which work like tree and store character as node and leaf as word formation from that char.

e.g A -> n, t, i, s so word formation like An, At, AI, As now further child nodes like A> n> t for Ant

The trie can combine nodes as one where only a single branch exists, which reduces the depth of the tree. This also reduces the traversal time, which in turn increases the efficiency. As an example, a space- and time-efficient model of the above trie is the following:

Track the top searches

Along with char/word we can also store count for ranking purpose. Let’s say that a user searches for UNITED 15 times, UNIQUE 20 times, UNIVERSAL 21 times, and UNIVERSITY 25 times

Trie partitioning

As one server might not fit for scaling purpose we can partition trie to distribute load, we can use char as partition key at each level

First we can let say have 26 server based on alphabet

- A -> Server 1

- B -> Server 2

- Z -> Server 26

We can also further break down if it grows like ab, ac, ad, ae and so on

And we can place a mapping service to hold mapping and route request accordiongly

Update the trie

Since billions of query happen in a day ranking and query must be updated frequently since our trie is running in ram and with larger size.

So we can perform update in 2 step

- Update offline data on new query and ranking

- After certain interval bring a new replication parallely

- Decommission old replication

Q. If the prefix frequencies keep increasing over time, the corresponding integers storing them can overflow. How can we manage this issue?

Ans: We can normalize frequencies by mapping them in a range, let’s say between zero and 1,000. Alternatively, we can stop any further additions after a certain threshold is reached, assuming that any prefix reaching that threshold is at the top of the rankings.

Detailed Design

The Assembler decouples trie updates from the query path by batching logs → aggregating frequencies → rebuilding tries periodically, ensuring low-latency suggestions + scalable updates.

- Collection Service → Collects raw user queries + metadata, stores in HDFS.

- Aggregator → Consolidates raw logs, counts prefix frequencies using MapReduce, stores results in Cassandra.

- Trie Builder → Uses aggregated data to build/update tries, stores them in MongoDB (persistent + recoverable).

Q. How would you handle misspelled or incomplete words in the Typeahead Suggestion System?

Handle misspelled/incomplete words by combining tries for prefix matches, fuzzy search for corrections, and frequency-based ranking, with caching to keep latency low.

Q. Should we collect data and build a trie per user, or should it be shared among all users?

Since we aim to design a system on a scale that’s similar to Google Search, billions of users will be using our service. Since the number of users would be huge, maintaining a tree for each user would become complex and time-consuming. There’s also a possibility of duplicated trees if several users have typed some common searches, resulting in resource wastage.

Therefore, our design assumes a common trie that’s shared among users, where the ranking would be based on single phrases in the trie and the frequency of the terms.

Validate Non Functional Requirements

The system is designed to meet the key non-functional requirements of low latency, fault tolerance, and scalability.

- Low Latency

- Trie depth reduction improves traversal speed.

- Offline updates ensure trie construction does not block user queries.

- Redis caching on top of NoSQL databases accelerates lookups.

- Geographic distribution of servers minimizes network delay.

- Partitioned tries distribute workload efficiently.

- Fault Tolerance

- Replication of tries and databases ensures resilience.

- Partitioning prevents single points of failure.

- Failover mechanisms allow continued operation during server crashes.

- Scalability

- New application servers can be added dynamically with increasing load.

- Trie partitions can scale horizontally with user traffic.

- NoSQL databases (Cassandra, MongoDB) handle large-scale distributed storage.

Client-Side Optimizations

- Debouncing keystrokes (e.g., >160 ms delay) to reduce unnecessary calls.

- Waiting for a minimum character threshold before firing queries.

- Local caching of recent history for quick re-suggestions.

- Pre-establishing connections (via WebSocket) for faster first response.

- Edge caching/CDNs reduce round-trip time further.

Personalization

- Suggestions customized per user based on history, location, and language.

- Personalized queries prioritized over global popular ones.

- Cached personal histories improve speed and user experience.

Summary

The system effectively pushes heavy processing offline (via assembler, trie updates, and aggregators), leverages caching + partitioning for speed, and uses replication + distributed databases for reliability. By combining server-side scalability with client-side optimizations and personalization, the design successfully meets its non-functional requirements of low latency, fault tolerance, and scalability while ensuring a smooth user experience.