Problem statement

When content is uploaded to social media platforms such as YouTube, Instagram, or Twitter, it can receive millions of likes, views, or retweets within seconds, minutes, or hours. To keep up, the counters behind these numbers must be updated efficiently.

A simple approach is to lock a row while persisting the data and release it after the update. But this creates several challenges:

- High concurrency – Millions of write operations occur in a very short span of time.

- Potential data loss – Some queries may get dropped if the system cannot process them fast enough.

- Write bottlenecks – APIs may need to wait (or block) until the write operation is complete, causing delays.

This situation is commonly referred to as the heavy hitters problem.

Solution 1 : Add a Queue in Between

Each like request goes to the application server first.

Instead of directly updating the database, the server pushes the event into a message queue (e.g., Kafka, RabbitMQ, SQS).

A consumer service reads from the queue and batches or serializes the updates to the database.

Benefits:

- Protects the database from being overwhelmed by concurrent writes.

- Allows batching of increments, improving efficiency.

- Queue acts as a buffer to absorb sudden spikes in traffic.

Trade-off:

- Adds delay between the like action and the counter update (eventual consistency).

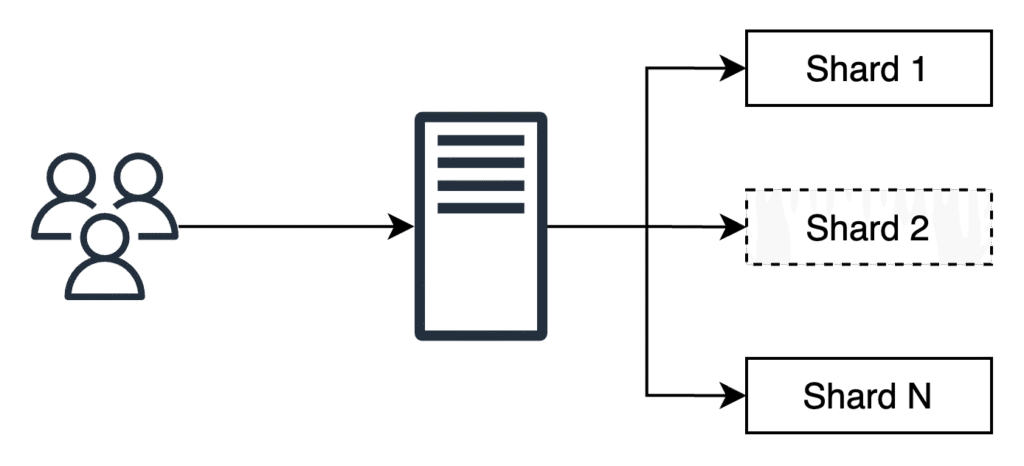
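The consumer side of this design can be sketched as follows. This is a minimal in-process illustration, assuming Python's standard `queue.Queue` stands in for Kafka/RabbitMQ/SQS and a plain dict stands in for the database; the names `drain_batch` and `apply_batch` are hypothetical.

```python
import queue
from collections import Counter

def drain_batch(events, max_batch):
    """Pull up to max_batch like-events off the queue and fold them into per-post deltas."""
    deltas = Counter()
    for _ in range(max_batch):
        try:
            post_id = events.get_nowait()
        except queue.Empty:
            break  # queue is drained; flush what we have
        deltas[post_id] += 1
    return deltas

def apply_batch(store, deltas):
    """Apply one write per post instead of one write per like."""
    for post_id, delta in deltas.items():
        store[post_id] = store.get(post_id, 0) + delta

# Assumption: the queue is in-process and the "database" is a dict.
events = queue.Queue()
for post_id in ["p1", "p1", "p2", "p1"]:
    events.put(post_id)

like_counts = {}
apply_batch(like_counts, drain_batch(events, max_batch=100))
print(like_counts)  # {'p1': 3, 'p2': 1}
```

Four like events turn into two database writes here. The larger the batch, the fewer the writes, but the longer a like waits before it shows up in the count.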

Solution 2 : Sharded Counter

Instead of maintaining a single counter row in the database (which quickly becomes a hotspot), the counter is split into multiple shards.

How it Works

- When a like request comes in, the application server:

- Randomly picks one of the counter’s N shards (say, N = 100).

- Increments that shard’s counter instead of the global counter.

- N is chosen per counter according to the expected write load.

API design

createCounter(counter_id, number_of_shards)

counter_id: Unique ID of the counter. The caller of this API can use a sequencer to get a unique identifier.

number_of_shards: Number of shards for the counter.

writeCounter(counter_id, action_type)

counter_id: Unique ID of the counter. The caller of this API can use a sequencer to get a unique identifier.

action_type: increment or decrement

readCounter(counter_id)

counter_id: Unique ID of the counter. The caller of this API can use a sequencer to get a unique identifier.

Detailed Design

- How many shards should be created against each new tweet?

- How will the shard value be incremented for a specific tweet?

- What will happen in the system when read requests come from the end users?

The following is the list of main counters created against each new tweet:

- Tweet like counter

- Tweet reply counter

- Tweet retweet counter

- Tweet view counter in case a tweet contains video

How does the system decide the number of shards in each counter?

- Too few shards → high write contention, slow writes.

- Too many shards → high read overhead, as reads must aggregate from more shards across potentially distributed nodes.

Factors Influencing Shard Count

- Predicted Write Load

- Higher follower count = more expected likes/retweets.

- Example: Tweets from celebrities get more shards than tweets from regular users.

- Content Popularity Signals

- Tweets with trending hashtags may require more shards, as they are likely to attract massive engagement.

- Activity Pattern (Long Tail)

- Many human activities show a burst of engagement early on, followed by a sharp decline.

- After the peak, fewer shards may be needed.

- Prediction Uncertainty

- Initial estimates can be wrong; hence, the system must adapt dynamically.
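One way to turn a predicted write load into an initial shard count is simple capacity arithmetic. The sketch below is illustrative only: the function name, the per-shard capacity of 500 writes per second, and the bounds are all assumed values, not figures from any real system.

```python
import math

def initial_shard_count(expected_writes_per_sec,
                        per_shard_writes_per_sec=500.0,  # assumed capacity of one shard
                        min_shards=1,
                        max_shards=1000):                # assumed cap to bound read cost
    """Estimate how many shards are needed to absorb the predicted write rate."""
    needed = math.ceil(expected_writes_per_sec / per_shard_writes_per_sec)
    return max(min_shards, min(max_shards, needed))

print(initial_shard_count(20))          # regular user: 1
print(initial_shard_count(40_000))      # celebrity: 80
print(initial_shard_count(10_000_000))  # capped at 1000
```

The upper bound matters because every extra shard adds to the cost of aggregating a read.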

Dynamic Shard Scaling

To handle unpredictable workloads, the system should expand or shrink shards dynamically:

- Expand: Add more shards when write requests spike.

- Shrink: Retire shards when engagement dies down to save resources.

Monitoring & Feedback Mechanism

- Continuously monitor write load per shard.

- Use load balancers to route requests evenly across shards.

- Feedback loop helps in:

- Deciding when to add shards.

- Deciding when to merge/close shards.

- Ensuring near-optimal resource utilization while maintaining user experience.

What happens when a user with just a few followers has a post go viral on Twitter?

The system needs to detect such cases where a counter unexpectedly starts getting very high write traffic. We’ll dynamically increase the number of shards of the affected counter to mitigate the situation.
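A feedback rule for this kind of expansion and shrinking might look like the sketch below. The thresholds, the doubling and halving policy, and the name `next_shard_count` are assumptions chosen for illustration.

```python
def next_shard_count(current_shards, writes_per_sec,
                     target_per_shard=500.0,  # assumed comfortable load per shard
                     min_shards=1, max_shards=1000):
    """Decide the shard count for the next monitoring interval."""
    load = writes_per_sec / current_shards
    if load > target_per_shard:
        proposed = current_shards * 2       # expand: shards are overloaded
    elif load < target_per_shard / 4:
        proposed = current_shards // 2      # shrink: shards are mostly idle
    else:
        proposed = current_shards           # within the comfortable band
    return max(min_shards, min(max_shards, proposed))

print(next_shard_count(4, 10_000))  # viral spike on a small counter: 8
print(next_shard_count(8, 3_000))   # load within band: 8
print(next_shard_count(8, 400))     # engagement died down: 4
```

The gap between the expand and shrink thresholds is deliberate. After a doubling, per-shard load is still above the shrink threshold, so the counter does not flip back and forth. When shards are retired, their values must first be folded into the remaining shards so that the total is preserved.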

Burst of write requests

When millions of users interact with a celebrity’s tweet, the system experiences a massive burst of write requests. Each write request must be directed to one of the available shards for that tweet’s counter.

A key challenge: How should the system select shards efficiently across multiple nodes?

Shard Selection Approaches

1. Round-Robin Selection

- Requests are distributed across shards sequentially:

shard_1 → shard_2 → ... → shard_100 → shard_1.

- Advantages:

- Very simple to implement.

- Works well if all requests are similar in workload.

- Drawbacks:

- Ignores current load on shards → some may get overloaded while others are underutilized.

- A single shard can still become a bottleneck if multiple servers route requests to it at the same time.

2. Random Selection

- How it works: Each write request is assigned to a randomly selected shard.

- Advantages:

- Spreads load evenly on average and, unlike round-robin, does not let multiple servers fall into step and hit the same shard at the same time.

- Still very simple to implement.

- Drawbacks:

- Like round-robin, it does not account for variable load across shards or nodes.

- Some shards can still become hotspots due to randomness.

3. Metrics-Based Selection

- How it works: A dedicated load balancer or controller monitors shard health (CPU usage, queue length, latency, throughput) and assigns requests to the least-loaded shard.

- Advantages:

- Adaptive to real-world load conditions.

- Ensures more balanced resource utilization.

- Reduces hotspots, leading to more predictable performance.

- Drawbacks:

- More complex to implement.

- Requires continuous monitoring and coordination between load balancer and shard nodes.

- Slight latency overhead in making load-aware routing decisions.

In practice, large-scale systems often start with random or round-robin for simplicity, then evolve to metrics-based load-aware shard selection as traffic scales and performance becomes critical.
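The three approaches can be compared side by side in a small sketch. The selector function names are hypothetical, and the metrics-based variant assumes that a list of per-shard load figures (for example, queue lengths) is kept up to date by some monitoring component not shown here.

```python
import itertools
import random

def round_robin_selector(num_shards):
    """Cycle through shard indexes 0, 1, ..., num_shards - 1, then start over."""
    cycle = itertools.cycle(range(num_shards))
    return lambda: next(cycle)

def random_selector(num_shards, rng=None):
    """Pick a shard index uniformly at random."""
    rng = rng or random.Random()
    return lambda: rng.randrange(num_shards)

def least_loaded_selector(load_by_shard):
    """Pick the shard with the lowest reported load (metrics-based selection)."""
    return lambda: min(range(len(load_by_shard)), key=load_by_shard.__getitem__)

rr = round_robin_selector(3)
print([rr() for _ in range(5)])  # [0, 1, 2, 0, 1]

loads = [7, 2, 5]                # assumed metric: pending writes per shard
pick = least_loaded_selector(loads)
print(pick())                    # 1
loads[1] = 9                     # shard 1 becomes busy
print(pick())                    # 2
```

All three selectors share the same call shape, so a system can start with the simple ones and swap in the metrics-based one later without touching the write path.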

Managing Read Requests in Sharded Counters

- When a user sends a read request, the system must aggregate values from all shards of the counter.

- Doing this aggregation on every read leads to:

- Low read throughput (too many shard lookups).

- High read latency (especially across distributed nodes).

Strategy

- Instead of aggregating on every read, the system:

- Periodically sums shard values and stores the result in a cache.

- Reads are served from the cache for efficiency.

- Trade-off:

- Smaller accumulation interval = more accurate but higher overhead.

- Larger accumulation interval = less accurate but higher performance.
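The accuracy-versus-overhead trade-off is easier to see in code. In this sketch the class name `CachedTotal` is hypothetical, the shards are a plain list, and `refresh()` is called by hand; in a real system a scheduler would call it once per accumulation interval.

```python
class CachedTotal:
    """Serve reads from a periodically refreshed sum instead of summing shards per read."""

    def __init__(self, shards):
        self.shards = shards   # shard values, updated by writers elsewhere
        self.cached = 0

    def refresh(self):
        """The periodic accumulation step: one pass over all shards."""
        self.cached = sum(self.shards)

    def read(self):
        """Cheap read: no shard lookups, but possibly stale."""
        return self.cached

shards = [3, 4, 5]
total = CachedTotal(shards)
total.refresh()
print(total.read())  # 12

shards[0] += 10      # writes arrive between refreshes
print(total.read())  # 12 (stale until the next refresh)

total.refresh()
print(total.read())  # 22
```

The staleness of a read is bounded by the interval between refreshes, which is the knob described above.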

Use Case Where Sharded Counters (with Relaxed Consistency) Might Not Be Suitable

Sharded counters work best when eventual consistency is acceptable. They are not suitable when:

- Strong consistency is required.

- Example: Read-then-write operations, where a client first reads the exact current value before making a decision to update it.

- E.g., a payment system decrementing account balance (must be strictly accurate).

- E.g., inventory management where overselling stock must not occur.

- These cases require transactional support or strongly consistent counters, not sharded, eventually consistent ones.
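For contrast, a read-then-write operation such as reserving stock needs the check and the update to happen as one atomic step. The sketch below uses a single value guarded by a lock; the `Inventory` class is hypothetical, and a real system would more likely rely on a database transaction.

```python
import threading

class Inventory:
    """Single strongly consistent counter: check and decrement happen atomically."""

    def __init__(self, stock):
        self._stock = stock
        self._lock = threading.Lock()

    def try_reserve(self, qty=1):
        with self._lock:
            if self._stock >= qty:   # read the exact current value...
                self._stock -= qty   # ...and update it before anyone else can read
                return True
            return False

inventory = Inventory(stock=5)
results = []

def buyer():
    results.append(inventory.try_reserve())

threads = [threading.Thread(target=buyer) for _ in range(20)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(sum(results))  # 5: exactly five of the twenty buyers succeed
```

With a sharded, eventually consistent counter, no single place holds the exact current value, so this kind of check cannot be made safely.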

Using sharded counters for the Top K problem

Example: Twitter Trends

- Millions of hashtags are used across tweets.

- To calculate trending topics (a Top-K problem), Twitter maintains sharded counters per hashtag.

- Shard count depends on factors like:

- The number of followers of the user who first used the hashtag.

- Regional popularity (hashtags tracked per location).

- Time window (e.g., last 24 hours).

Counters in Action

- Global Hashtag Counter – Total number of tweets with a hashtag worldwide.

- Region-wise Counters – Track hashtag popularity in specific locations.

- Example: If #NYC reaches 10,000 tweets in New York, it may appear in local trends.

- If hashtags cross thresholds globally, they may appear in worldwide trends.

- Time-windowed Counters – Ensure trends reflect recent activity (e.g., tweets in the last 24 hours).
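Once the per-hashtag counts for a region and time window are available, picking the trends is a Top-K selection. A minimal sketch, assuming the aggregated counts are already in a dict and using the 10,000-tweet threshold from the example above; the function name `top_k` and the sample numbers are made up.

```python
import heapq

def top_k(counts, k, threshold=0):
    """Return the k hashtags with the highest counts that meet the threshold."""
    eligible = ((tag, n) for tag, n in counts.items() if n >= threshold)
    return heapq.nlargest(k, eligible, key=lambda item: item[1])

# Assumed aggregated counts for one region over the last 24 hours.
new_york = {"#NYC": 12_000, "#Mets": 8_000, "#Subway": 15_000, "#Brunch": 300}

print(top_k(new_york, k=2, threshold=10_000))
# [('#Subway', 15000), ('#NYC', 12000)]
```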

Extending Top-K Beyond Hashtags

- Top-K Tweets in a Timeline – Based on factors like follower count, engagement (likes/retweets), recency, and sometimes promoted tweets.

- Sharded counters are used to maintain engagement counts efficiently for these tweets.

Placement of Sharded Counter

- Options include:

- On application servers.

- On dedicated counter nodes.

- At the edge (CDN nodes) to reduce latency.

- For Twitter, placing sharded counters near users helps handle heavy hitter and Top-K workloads efficiently.

Q1. Should we lock all shards of a counter before accumulating their values?

Answer:

No, we should not lock all shards during aggregation. Locking would create bottlenecks and reduce system scalability. Instead:

- Shard values are periodically aggregated and stored in a scalable datastore (e.g., Cassandra).

- Reads are served from these precomputed values (with acceptable staleness).

- This allows high read throughput without blocking ongoing writes.

- In-memory stores like Redis/Memcached hold mappings between tweets, counters, and their shards for fast access.

Key Insight:

Sharded counters work with an eventual consistency model. We rely on background aggregation + caching rather than strong locks.

Managing Reads with Sharded Counters

1. Storing Aggregated Counter Values

- Counters are periodically aggregated from shards and persisted in a scalable datastore like Cassandra.

- Cassandra maintains precomputed values for:

- Views

- Likes

- Comments

- Other engagement metrics

- These persisted values represent the last computed sum of all shards for each counter.

2. Serving Reads Efficiently

- When a user requests a timeline:

- The nearest server fetches values from Cassandra.

- These values are used to construct region-specific trends (e.g., Top K hashtags).

- Local Top-K lists are merged by the application server into a global Top-K list.

- Final results are sent to a cache (Redis/Memcached) for faster future access.
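The merge step can be sketched as summing counts across the local lists and taking the K largest totals. The function name and the sample data are hypothetical.

```python
from collections import Counter

def merge_top_k(local_lists, k):
    """Combine per-region (hashtag, count) lists into one global Top-K list."""
    totals = Counter()
    for local in local_lists:
        for tag, n in local:
            totals[tag] += n
    return totals.most_common(k)

new_york = [("#A", 50), ("#B", 40)]
london = [("#B", 30), ("#C", 25)]

print(merge_top_k([new_york, london], k=2))  # [('#B', 70), ('#A', 50)]
```

Note that merging lists that were already truncated to K entries is an approximation. A hashtag that ranks just below the cut-off in every region never reaches the merge, even if its global total would qualify.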

3. Metadata Storage for Sharded Counters

- Redis/Memcached maintains counter metadata to quickly map tweets → counters → shards:

- Tweet ID → Counter IDs (e.g., likes, replies, retweets).

- Counter ID → Shard list (all shards that maintain that counter).

- This metadata ensures fast routing of write requests to the right shard.

4. Write Flow

- Incoming write requests (likes, replies, etc.):

- Identify the correct counter via metadata.

- The counter selects a shard randomly (or based on metrics).

- Shard is updated in parallel with other shards.
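The metadata lookups and the write flow fit together as in the sketch below. Plain dicts stand in for Redis/Memcached and for the shard store, and every key format and name here is an assumption for illustration.

```python
import random

# Assumed metadata layout: tweet -> counters, counter -> shard keys.
tweet_counters = {"tweet:1": {"like": "c-like-1", "reply": "c-reply-1"}}
counter_shards = {
    "c-like-1": ["c-like-1:0", "c-like-1:1", "c-like-1:2"],
    "c-reply-1": ["c-reply-1:0"],
}
# Assumed shard store: shard key -> current value.
shard_values = {key: 0 for keys in counter_shards.values() for key in keys}

def handle_write(tweet_id, kind):
    """Route one engagement event to a shard of the right counter."""
    counter_id = tweet_counters[tweet_id][kind]            # tweet -> counter
    shard_key = random.choice(counter_shards[counter_id])  # counter -> one shard
    shard_values[shard_key] += 1
    return shard_key

for _ in range(100):
    handle_write("tweet:1", "like")
handle_write("tweet:1", "reply")

likes = sum(shard_values[k] for k in counter_shards["c-like-1"])
print(likes)  # 100
```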

5. Read Flow

- A periodic reduce step aggregates shard values → stores results in Cassandra.

- User reads are served from Cassandra (or Redis cache), not directly from shards.

- This avoids high-latency multi-shard lookups on every read.

Evaluating the Requirements

Sharded counters improve system performance by ensuring high availability, scalability, and reliability, which are critical for large-scale applications like Twitter, YouTube, and Instagram.

Availability

- A single counter for likes, views, or replies creates a single point of failure.

- Sharded counters eliminate this risk by distributing the counter across multiple shards.

- Even if some shards fail or become slow, the system continues to serve requests.

- Result: Fault tolerance and continuous availability.

Scalability

- Sharded counters enable horizontal scaling.

- As traffic increases, the system can add more shards across additional nodes.

- Increased shards → higher throughput → better performance under heavy load.

- Result: Elastic scaling to match demand.

Reliability

- Massive write requests are distributed across shards, reducing bottlenecks.

- Each request is immediately assigned to a shard → no blocking queues.

- Counters are periodically aggregated and stored in stable storage (e.g., Cassandra).

- This provides durability across failures, with the persisted totals converging to the true count (eventual consistency).

- Result: High reliability and better hit ratios.

Conclusion

Sharded counters have become a key component in modern large-scale systems. They effectively handle:

- Heavy hitters problem (hotspot counters with millions of writes).

- Top-K problem (ranking hashtags, tweets, or posts efficiently).

By delivering high availability, scalability, and reliability, sharded counters significantly improve the performance and resilience of large-scale services.